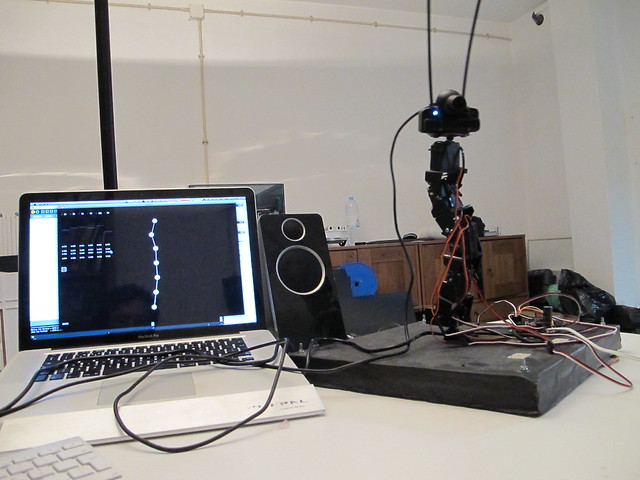

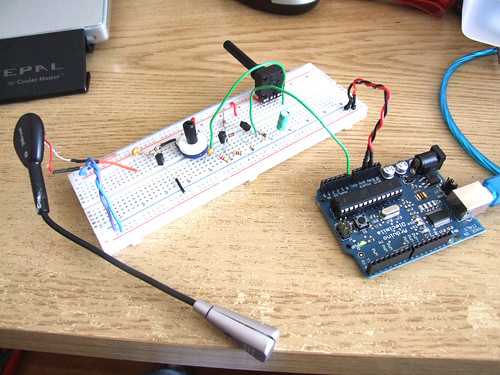

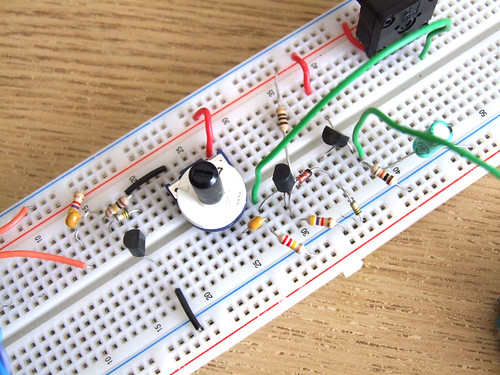

For a long time I have wanted to control the Arduino from Processing. I tried a lot of libraries and a lot of approaches, but none of them suited what I needed: something simple to implement and easy to understand. Because I am not a programmer, I asked for help, and @pauloricca replied with a quick and really good solution.

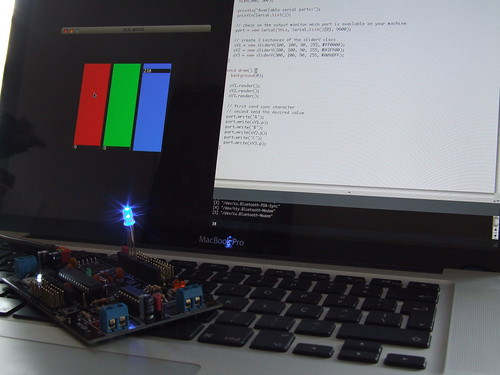

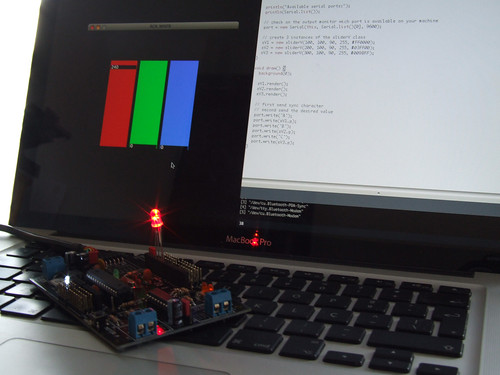

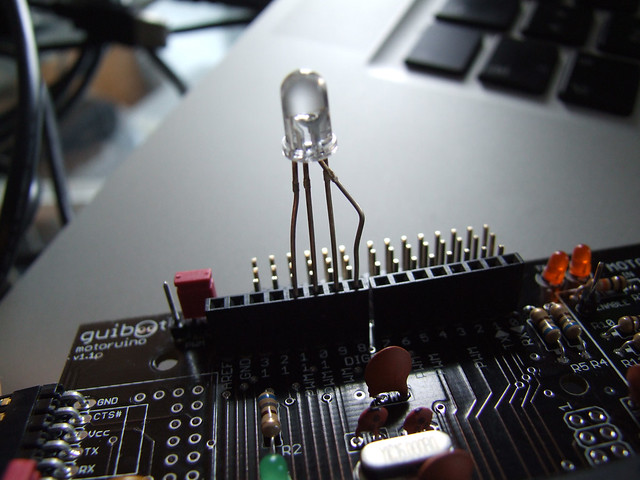

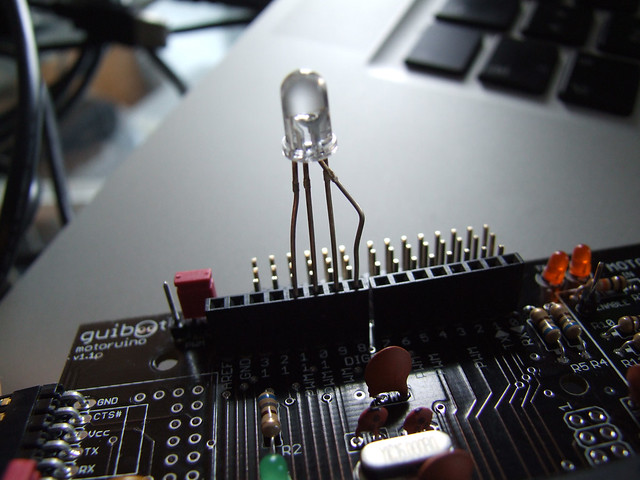

In this example I have connected an RGB LED to the Arduino, and in Processing we have a simple mixer to fade the color channels in and out. DON'T COPY MY WIRING: an LED always needs a resistor in series. Here I only wanted to show the serial communication part, so I skipped the resistors, lazy me! Never do this, otherwise you will kill your LEDs very fast.

On the Arduino side, I defined three digital output pins, 9, 10 and 11, which are PWM-capable. Then I set pin 8 as an OUTPUT and used digitalWrite() to drive it LOW, so it acts as the GROUND pin. In the loop() function we use a switch() statement that detects when the sync characters ‘R’, ‘G’ and ‘B’ are received. These characters tell us which value is coming next. The GetFromSerial() function is called every time we need to read a value from the serial port; it waits until a byte is available and returns it.

    void setup()
    {
      // start the serial communication at 9600 baud
      Serial.begin(9600);

      // output pins (all PWM capable)
      pinMode(9, OUTPUT);  // red
      pinMode(10, OUTPUT); // blue (written by case 'B' in loop())
      pinMode(11, OUTPUT); // green (written by case 'G' in loop())

      // another output pin, held LOW so it can be used as GROUND
      pinMode(8, OUTPUT);
      digitalWrite(8, LOW);
    }

    void loop()
    {
      // the first byte is the sync character;
      // it tells us which channel the next byte belongs to
      switch (GetFromSerial())
      {
        case 'R':
          analogWrite(9, GetFromSerial());
          break;
        case 'G':
          analogWrite(11, GetFromSerial());
          break;
        case 'B':
          analogWrite(10, GetFromSerial());
          break;
      }
    }

    // wait until a byte arrives on the serial port, then return it
    int GetFromSerial()
    {
      while (Serial.available() <= 0) {
        // nothing to read yet, keep waiting
      }
      return Serial.read();
    }
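
To see how the sync characters work without any hardware, the sketch below replays a stream of bytes through the same logic as loop(), in plain C++ on a computer. `RgbState` and `decodeStream` are hypothetical names introduced for this illustration. One deliberate difference: the Arduino waits for a missing value byte, while this simulation simply stops at the end of the stream.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Last value decoded for each color channel.
struct RgbState {
    int r = 0;
    int g = 0;
    int b = 0;
};

// Walks the byte stream the way loop() does: read one byte and, if it is
// a sync character, consume the next byte as that channel's value.
// Any other byte is skipped, because the switch() has no default case.
RgbState decodeStream(const std::vector<std::uint8_t>& bytes) {
    RgbState state;
    std::size_t i = 0;
    while (i < bytes.size()) {
        const std::uint8_t sync = bytes[i++];
        if (sync != 'R' && sync != 'G' && sync != 'B') {
            continue; // not a sync character, ignore it
        }
        if (i >= bytes.size()) {
            break; // value byte has not arrived (the Arduino would wait here)
        }
        const int value = bytes[i++];
        if (sync == 'R') {
            state.r = value;
        } else if (sync == 'G') {
            state.g = value;
        } else {
            state.b = value;
        }
    }
    return state;
}
```

For example, `decodeStream({'R', 255, 'G', 0, 'B', 128})` yields r = 255, g = 0, b = 128. A value that happens to equal a sync character, such as 82 (the code for 'R'), is still read as a value as long as the receiver is in step with the sender, because it is consumed right after its own sync character.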

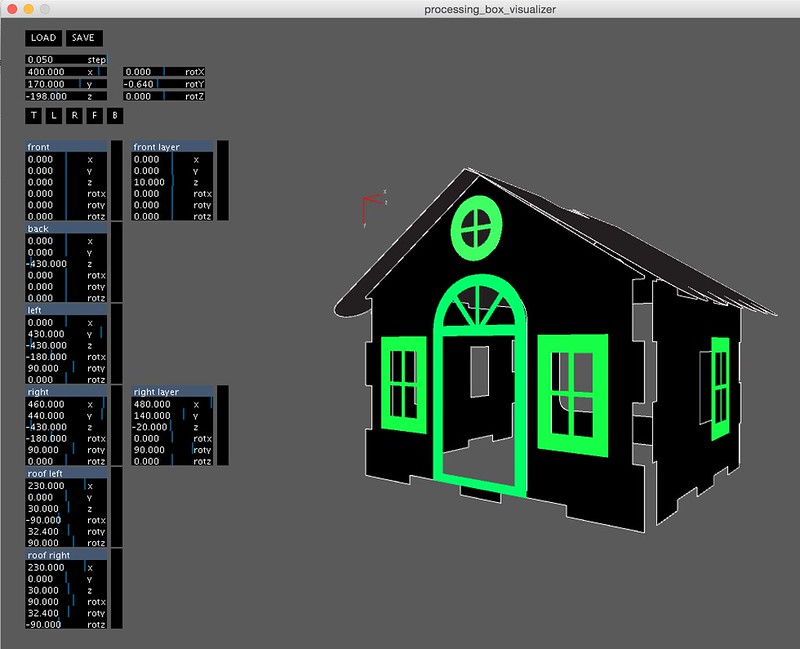

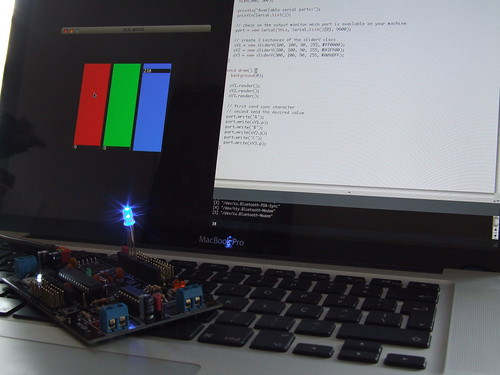

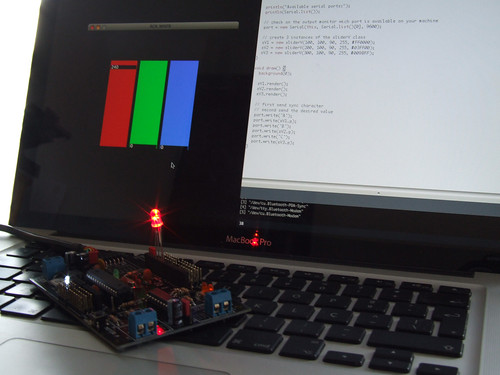

On the Processing side, I am using a slider class adapted from anthonymattox.com, and I created three instances of it (I assume you understand the concept of a class). The important things to understand here are the import of the Serial library and the creation of a Serial object called “port”. In the setup() function I print the available serial ports and then choose which one is the Arduino port. In my case it is number 0; note that I am using a Mac, and if you are on a PC it should be COM1, COM2 or another COM#. Finally, in draw() I send each sync character, ‘R’, ‘G’ or ‘B’, followed by the value of the corresponding slider.

    import processing.serial.*;

    Serial port;
    sliderV sV1, sV2, sV3;
    color cor;

    void setup() {
      size(500, 500);

      println("Available serial ports:");
      println(Serial.list());

      // check in the console which port is available on your machine
      port = new Serial(this, Serial.list()[0], 9600);

      // create 3 instances of the sliderV class
      sV1 = new sliderV(100, 100, 90, 255, #FF0000);
      sV2 = new sliderV(200, 100, 90, 255, #03FF00);
      sV3 = new sliderV(300, 100, 90, 255, #009BFF);
    }

    void draw() {
      background(0);

      sV1.render();
      sV2.render();
      sV3.render();

      // for each channel: send the sync character, then the slider value
      port.write('R');
      port.write(sV1.p);
      port.write('G');
      port.write(sV2.p);
      port.write('B');
      port.write(sV3.p);
    }
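
The six port.write() calls in draw() amount to one small frame per screen refresh. As an illustration only, here is that frame built as a list of bytes in C++ (chosen to match the Arduino side); `toByte` and `buildFrame` are hypothetical names. The clamping is a defensive assumption of mine: the sliders above already keep their values between 0 and 255.

```cpp
#include <algorithm>
#include <cstdint>
#include <vector>

// Limit a value to the 0..255 range that fits in one serial byte.
std::uint8_t toByte(int value) {
    return static_cast<std::uint8_t>(std::max(0, std::min(255, value)));
}

// Builds the six bytes sent per frame: for each channel,
// the sync character followed by that channel's value.
std::vector<std::uint8_t> buildFrame(int red, int green, int blue) {
    return {
        static_cast<std::uint8_t>('R'), toByte(red),
        static_cast<std::uint8_t>('G'), toByte(green),
        static_cast<std::uint8_t>('B'), toByte(blue)
    };
}
```

Calling `buildFrame(255, 0, 128)` gives the bytes 'R', 255, 'G', 0, 'B', 128, which is the order the Arduino's switch() expects.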

    /*
    Slider Class - www.guilhermemartins.net
    based on www.anthonymattox.com slider class
    */

    class sliderV {
      int x, y, w, h, p; // position, size and current value (0..h)
      color cor;
      boolean slide;

      sliderV (int _x, int _y, int _w, int _h, color _cor) {
        x = _x;
        y = _y;
        w = _w;
        h = _h;
        p = 90; // initial value
        cor = _cor;
        slide = true;
      }

      void render() {
        // colored track
        fill(cor);
        rect(x-1, y-4, w, h+10);
        noStroke();

        // black handle with the current value printed on it
        fill(0);
        rect(x, h-p+y-5, w-2, 13);
        fill(255);
        text(p, x+2, h-p+y+6);

        // while the mouse is pressed over the slider, follow mouseY
        if (slide==true && mousePressed==true && mouseX<x+w && mouseX>x) {
          if ((mouseY<=y+h+150) && (mouseY>=y-150)) {
            p = h-(mouseY-y);
            // keep the value between 0 and h
            if (p<0) {
              p=0;
            }
            else if (p>h) {
              p=h;
            }
          }
        }
      }
    }
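
The slider arithmetic in render() is easy to check by hand. The sketch below repeats just that calculation in C++ (again to match the Arduino side); `sliderValue` is a hypothetical name, and it leaves out the mouse-pressed and mouseX checks as well as the 150-pixel margin that render() applies before updating.

```cpp
// Slider value for a given mouse height, mirroring
// p = h - (mouseY - y) and the clamp to 0..h in render().
// y is the top of the slider and h is its height in pixels.
int sliderValue(int mouseY, int y, int h) {
    const int p = h - (mouseY - y);
    if (p < 0) {
        return 0; // mouse is below the bottom of the slider
    }
    if (p > h) {
        return h; // mouse is above the top of the slider
    }
    return p;
}
```

With the sliders created above (y = 100, h = 255), the top of the slider at mouseY = 100 gives 255, the bottom at mouseY = 355 gives 0, and mouseY = 200 gives 155. Because h is 255, the result always fits in the single byte that is sent to the Arduino.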